How to Monitor in the Kubernetes Era

What is Kubernetes?

Kubernetes is an open-source system, originally developed by Google, for managing containerized applications running in a clustered environment. It simplifies management by automating the deployment and scaling of the containers that run service-oriented applications. Its development has been supported by a number of well-known partners, including CoreOS, VMware, Meteor, Red Hat and others.

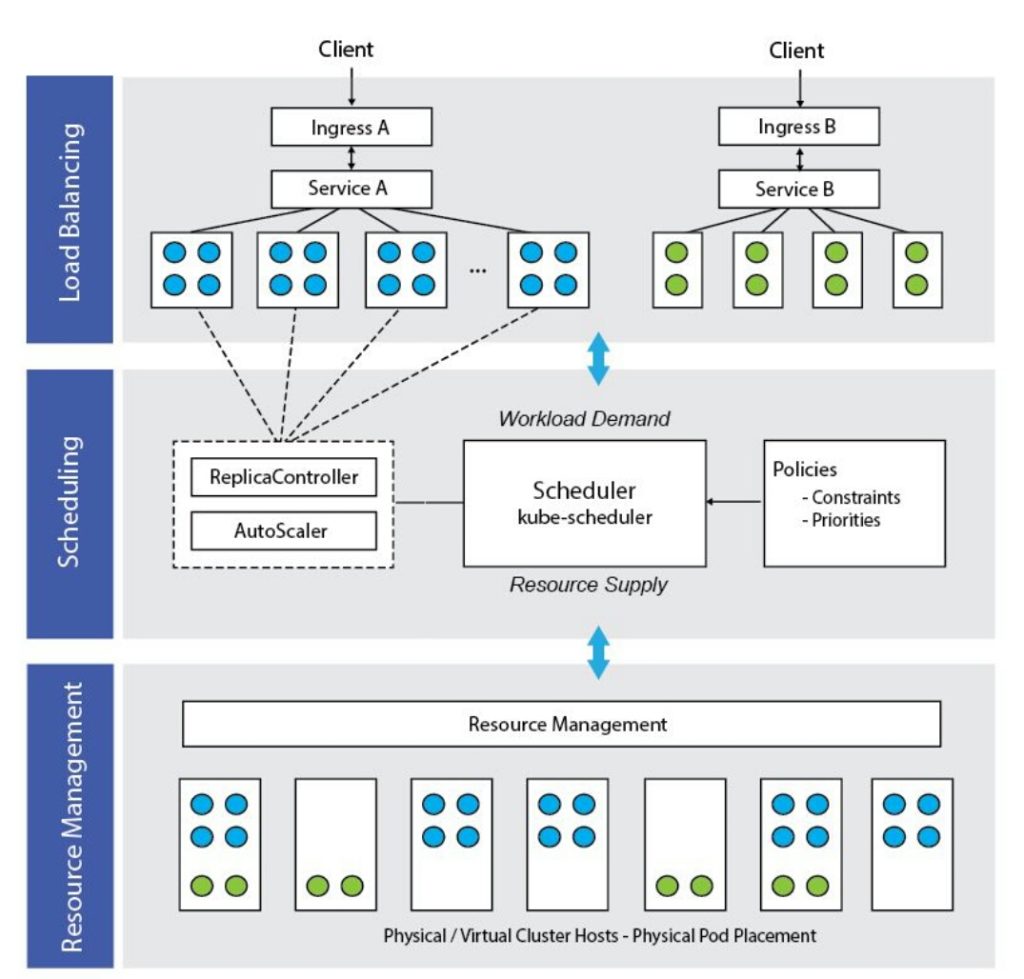

Kubernetes manages containers by creating, starting, stopping and destroying them automatically, so that applications run seamlessly and perform well. The containerized applications are distributed across groups of nodes and are orchestrated by:

- Automating the deployment of the containers

- Scaling the containers in or out, on the fly

- Providing load balancing between the containers by organizing them in groups

- Rolling out new versions of the application containers

- Providing container flexibility

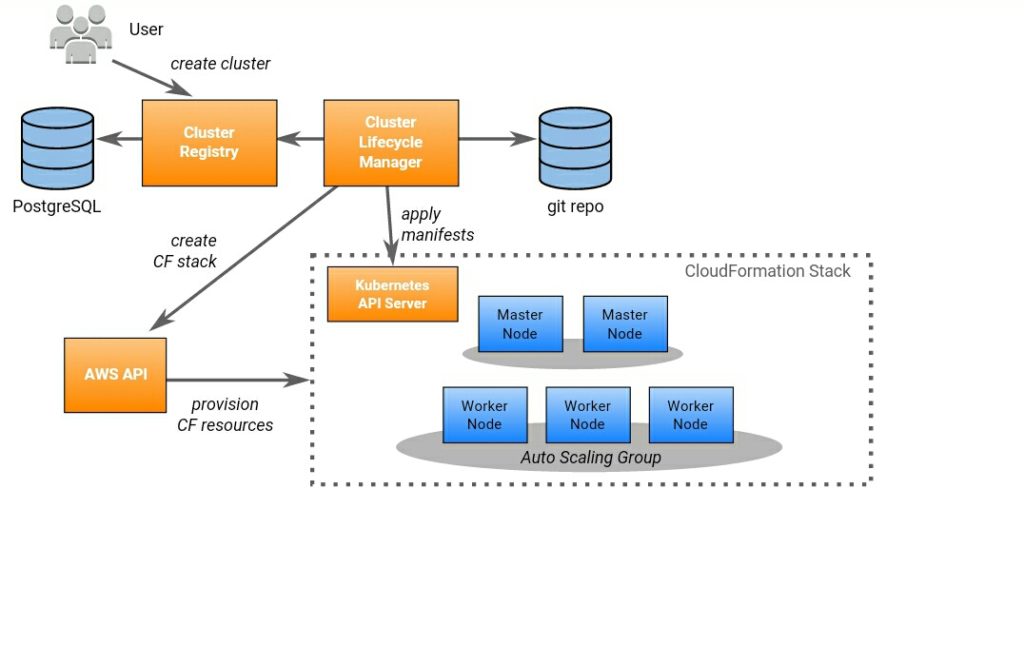

Whether your containers run in AWS, Azure, Google Cloud Platform, or a hybrid or on-premises infrastructure, Kubernetes can deploy them effectively.

How Kubernetes works

Although containers are used to deploy applications, the workload objects that define the types of work are specific to Kubernetes. Here is how Kubernetes works behind the scenes.

- Pods

A pod is the smallest unit in Kubernetes that you can create and deploy. There are two types of pods:

- Pods that consist of a single container

In this case, the pod acts as a wrapper around the container, and Kubernetes manages the pod rather than managing the container directly.

- Pods that contain more than one container

In this case, co-located containers that need to share resources are tightly coupled in a pod, which wraps them, along with their storage resources, as a single manageable entity.

Each pod runs a single instance of an application. If you want to scale your application horizontally, you need multiple pods, one for each instance. In the Kubernetes model, this is known as “replication”, and the replicated pods are created and managed as a group by a controller.

- Nodes and clusters

Nodes are the virtual or physical machines that are grouped into clusters. Every cluster has at least one master. However, it is better to have more than one master to ensure high availability.

Pods run on nodes, and each node has one kubelet. The kubelet makes sure that the containers described in the PodSpecs assigned to its node are running and healthy.

Kubernetes also supports multiple virtual clusters backed by the same physical cluster. These virtual clusters are called namespaces. Thus, you can spin up one cluster and share its resources among different environments, which saves resources, time and cost.

- Replica sets and deployments

Replica sets are the work units that create and destroy pods. A replica set makes sure that the specified number of replicas (pods) is running at all times, and thus preserves continuity. If pods are terminated or fail, the replica set replaces them automatically.

To manage replica sets, Kubernetes introduced deployments. You just need to describe the desired state in the deployment object, and it will handle the replica sets.

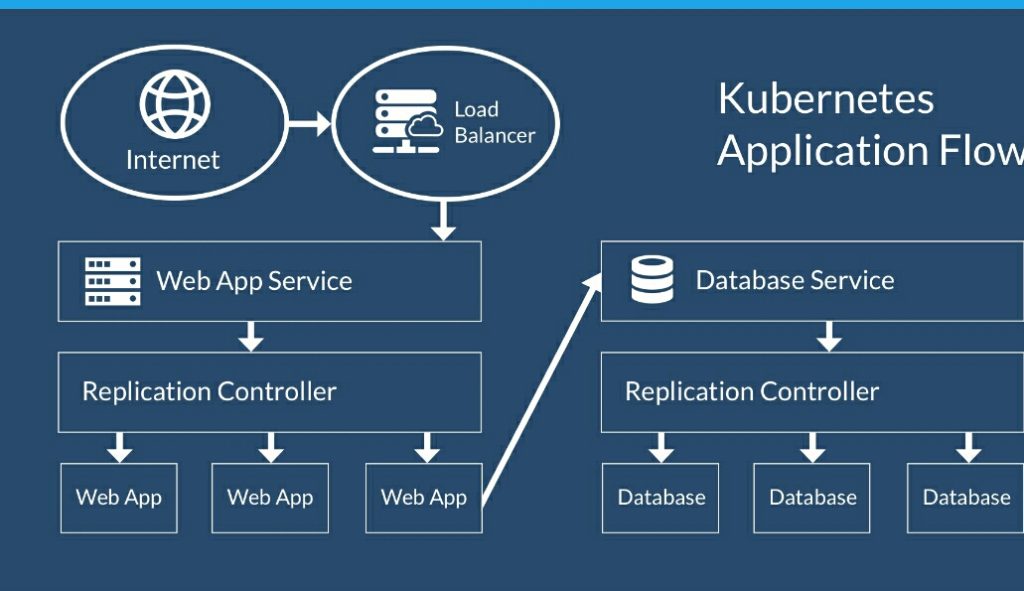
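The replace-on-failure behaviour described above can be sketched as a small reconciliation loop. This is a toy model in plain Python, not Kubernetes code: the class `ReplicaSetSketch`, its fields and the pod naming scheme are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class ReplicaSetSketch:
    """Toy model of a replica set (hypothetical, not a Kubernetes API)."""
    name: str
    desired: int                                  # desired number of pods
    pods: set = field(default_factory=set)        # names of running pods
    _ids: count = field(default_factory=count, repr=False)

    def reconcile(self) -> None:
        # Create pods until the running count matches the desired count.
        while len(self.pods) < self.desired:
            self.pods.add(f"{self.name}-{next(self._ids)}")
        # Remove surplus pods if the desired count was lowered.
        while len(self.pods) > self.desired:
            self.pods.pop()


rs = ReplicaSetSketch(name="web", desired=3)
rs.reconcile()                  # three pods are created
failed = next(iter(rs.pods))
rs.pods.discard(failed)         # simulate a pod failure
rs.reconcile()                  # a replacement pod is created
print(len(rs.pods))             # 3
```

The caller only changes `desired` or lets pods disappear; the loop moves the actual state back to the described state, which is the idea behind declaring a state in a deployment.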

- Services

As pods are constantly created and destroyed, their IP addresses are not stable or reliable, so communication between pods cannot depend on these addresses. This is where services come in. A service acts as an endpoint and exposes a stable IP address for a set of pods.
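To make the idea concrete, here is a minimal sketch of how a stable service address can map to a changing set of pods. Kubernetes services usually pick their pods with a label selector; the dictionaries, IP addresses and the helper `select_pods` below are illustrative assumptions, not real API objects.

```python
def select_pods(selector: dict, pods: list) -> list:
    """Return the IPs of pods whose labels contain every selector pair."""
    return [
        pod["ip"]
        for pod in pods
        if all(pod["labels"].get(k) == v for k, v in selector.items())
    ]


# Hypothetical pods; their IPs change whenever a pod is replaced.
pods = [
    {"name": "web-0", "ip": "10.0.0.4", "labels": {"app": "web"}},
    {"name": "web-1", "ip": "10.0.1.7", "labels": {"app": "web"}},
    {"name": "db-0", "ip": "10.0.2.2", "labels": {"app": "db"}},
]

# Hypothetical service: the cluster IP stays fixed, the backends do not.
service = {"name": "web", "cluster_ip": "10.96.0.10", "selector": {"app": "web"}}

print(service["cluster_ip"], "->", select_pods(service["selector"], pods))
```

Clients talk to the service address only. When a pod is replaced and gets a new IP, the selector simply matches the new pod, and the address seen by clients does not change.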

How does Kubernetes monitoring differ?

When monitoring Kubernetes, you need to rethink and reorient your strategies, because monitoring Kubernetes differs from monitoring physical machines as well as traditional hosts, such as VMs. Let’s look at these differences.

- Tags and labels have become essential

In a containerized infrastructure, it is hard to know where your applications are running, and tags and labels are what make it possible. They have become the only practical way to identify containers and pods, and they let you look at every aspect of the containerized infrastructure.
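As a rough illustration of why labels matter for monitoring, the sketch below aggregates metric samples by a label instead of by host. The sample layout, the label names and the helper `sum_by_label` are assumptions made for this example, not the format of any particular monitoring tool.

```python
from collections import defaultdict


def sum_by_label(samples: list, label: str) -> dict:
    """Sum metric values across all samples that share a label value."""
    totals = defaultdict(float)
    for sample in samples:
        key = sample["labels"].get(label, "<unlabeled>")
        totals[key] += sample["value"]
    return dict(totals)


# Hypothetical CPU usage samples (in cores), one per pod.
samples = [
    {"labels": {"app": "web", "pod": "web-0"}, "value": 0.25},
    {"labels": {"app": "web", "pod": "web-1"}, "value": 0.5},
    {"labels": {"app": "db", "pod": "db-0"}, "value": 1.0},
]

print(sum_by_label(samples, "app"))  # {'web': 0.75, 'db': 1.0}
```

The same samples can be sliced by any other label (pod, namespace, version) without knowing which machine produced them.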

- More components are available for monitoring

In a traditional infrastructure, only two layers needed monitoring: the applications and the hosts that run them. Now, the containers and Kubernetes itself also need to be monitored, and metrics need to be collected from them.

- Applications move among the containers

Because applications are scheduled dynamically, you do not always know where they are running, but they still need to be monitored. For that, you need a monitoring system that keeps collecting metrics from the applications wherever they run, so that monitoring continues without interruption.

Although you need to rethink and change your approach when monitoring Kubernetes, you can keep the infrastructure well orchestrated if you are clear about what to observe, how to track it and how to interpret the data.
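One way to picture this is a monitor that follows scheduling events rather than a fixed list of hosts. The sketch below replays a hypothetical event stream to find where an application currently runs; the event fields and the helper `locate` are invented for illustration and do not mirror the real Kubernetes event format.

```python
def locate(app: str, events: list) -> dict:
    """Replay scheduling events and return {pod: node} for the app's live pods."""
    placement = {}
    for event in events:
        if event["app"] != app:
            continue
        if event["type"] == "scheduled":
            placement[event["pod"]] = event["node"]
        elif event["type"] == "deleted":
            placement.pop(event["pod"], None)
    return placement


# Hypothetical event stream: web-0 is deleted and web-1 starts on another node.
events = [
    {"type": "scheduled", "app": "web", "pod": "web-0", "node": "node-a"},
    {"type": "scheduled", "app": "db", "pod": "db-0", "node": "node-a"},
    {"type": "deleted", "app": "web", "pod": "web-0", "node": "node-a"},
    {"type": "scheduled", "app": "web", "pod": "web-1", "node": "node-b"},
]

print(locate("web", events))  # {'web-1': 'node-b'}
```

A collector built on this idea keeps scraping the "web" application after it moves from node-a to node-b, because it tracks the application and not the machine.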